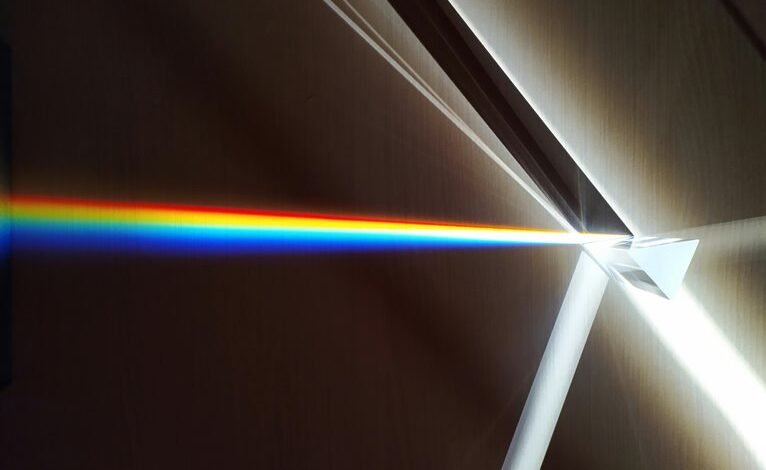

Neural Prism 2105318722 Hyper Beam

Neural Prism 2105318722 Hyper Beam is a structured, multiresolution AI processing pathway designed for deterministic timing and scalable throughput. It routes neural computations through synchronized, modular lanes to reduce cross-talk and to allow fine-grained resource partitioning. The approach supports provenance, reproducibility, and auditable improvements without restricting how the system is tuned. Its adaptable representations trade off bandwidth, latency, and compute. Performance still needs to be examined domain by domain, and some implementation details remain to be clarified.

What Neural Prism 2105318722 Hyper Beam Does For AI Processing

Neural Prism 2105318722 Hyper Beam routes neural computations through a structured, multiresolution architecture. The design aims at faster inference and adaptable representations, and it supports modular updates without reengineering the core.

In practice, the main considerations are implementation effort, resource allocation, and ongoing maintenance.

Data governance covers provenance, reproducibility, and access control, so that improvements are transparent and auditable while teams remain free to tune the system.
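The provenance and reproducibility points can be made concrete with a small sketch. It is only an illustration under stated assumptions: the record schema, the `provenance_record` name, and the config keys (`lanes`, `buffer`) are hypothetical and are not part of any Neural Prism interface. The idea is to hash the run configuration and the input so that a later audit can confirm two runs used the same setup.

```python
import hashlib
import json


def provenance_record(config: dict, input_bytes: bytes, seed: int) -> dict:
    """Build an auditable record for one run (hypothetical schema)."""
    # Canonical JSON (sorted keys, fixed separators) so that equal
    # configs always produce the same hash, whatever their key order.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return {
        "config_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "input_sha256": hashlib.sha256(input_bytes).hexdigest(),
        "seed": seed,
    }


record_a = provenance_record({"lanes": 4, "buffer": 64}, b"sample input", seed=7)
record_b = provenance_record({"buffer": 64, "lanes": 4}, b"sample input", seed=7)
print(record_a == record_b)  # True: key order does not change the record
```

Storing such a record next to each benchmark result is one simple way to make a later improvement traceable to the exact configuration that produced it.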

How The Architecture Enables High-Intensity Data Throughput

The architecture achieves high data throughput by orchestrating parallel processing lanes that balance bandwidth, latency, and compute. It aligns modular units into synchronized pipelines, enabling concurrent data flows while minimizing cross-talk. Distributed scheduling and fine-grained resource partitioning raise throughput further.

Efficiency comes from deterministic timing, data locality, and adaptive buffering, which let performance scale without sacrificing reliability.
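The lane idea described in this section can be sketched in a few lines. This is a minimal illustration, not the product's scheduler: `partition`, `run_lanes`, and the round-robin assignment are assumptions chosen for clarity. Each lane receives a fixed, non-overlapping slice of the input (so lanes never share items), runs in its own worker, and the results are merged back into the original order so the output is deterministic.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(items, lane_count):
    """Round-robin split: item i goes to lane i % lane_count."""
    return [items[lane::lane_count] for lane in range(lane_count)]


def run_lanes(items, work, lane_count=4):
    """Process each partition in its own worker and restore input order."""
    lanes = partition(items, lane_count)

    def process(lane_items):
        return [work(x) for x in lane_items]

    with ThreadPoolExecutor(max_workers=lane_count) as pool:
        # pool.map returns results in lane order, not completion order.
        lane_results = list(pool.map(process, lanes))

    merged = [None] * len(items)
    for lane, results in enumerate(lane_results):
        merged[lane::lane_count] = results  # inverse of the round-robin split
    return merged


print(run_lanes(list(range(10)), lambda x: x * x, lane_count=3))
# [0, 1, 4, 9, 16, 25, 36, 49, 64, 81]
```

Threads are used here only to keep the sketch short. For CPU-bound work in standard CPython, a process pool would be the usual substitute, since threads there do not run Python bytecode in parallel.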

Use Cases And Performance Benchmarks Across Domains

Use cases span high-performance computing, real-time analytics, edge inference, and cloud-native data services, illustrating how Neural Prism Hyper Beam scales across domains.

Performance benchmarks are compared across these workloads on three metrics: stability, throughput, and latency.
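A sketch of how those three metrics might be collected for any workload follows. It assumes a hypothetical `benchmark` helper and a stand-in workload. Stability is approximated here by the standard deviation of per-call latency, which is one reasonable choice among several.

```python
import statistics
import time


def benchmark(work, inputs):
    """Time each call and summarize throughput, latency, and stability."""
    latencies = []
    start = time.perf_counter()
    for item in inputs:
        t0 = time.perf_counter()
        work(item)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(latencies, n=100)
    return {
        "throughput_per_s": len(inputs) / elapsed,
        "latency_p50_s": statistics.median(latencies),
        "latency_p95_s": cuts[94],
        "latency_stdev_s": statistics.stdev(latencies),  # stability proxy
    }


# Stand-in workload: sum a fixed range 200 times.
summary = benchmark(lambda n: sum(range(n)), [10_000] * 200)
for name, value in summary.items():
    print(f"{name}: {value:.6g}")
```

The numbers printed depend entirely on the machine and workload, so they are only meaningful when compared against a baseline recorded under the same conditions.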

Two themes run through these use cases. The first is scalability. The second is interoperability, reproducibility, and measurable return on investment across diverse deployments.

Practical Implementation: Getting Started And Best Practices

Getting started with Neural Prism Hyper Beam follows three steps: identify the target workloads, choose the appropriate hardware and software stack, and establish baseline metrics. The approach should stay disciplined yet adaptable, with an emphasis on reproducibility and safety. The lane-based architecture informs design choices, while day-to-day practice covers deployment, monitoring, and continuous refinement. Clear governance, auditable processes, and room for experimentation underpin robust, scalable outcomes.
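Once baseline metrics exist, a simple gate can flag regressions before a change is rolled out. The sketch below is an assumption-laden illustration: the `regressions` function, the metric names, and the 5% tolerance are all hypothetical, and every metric is treated as "lower is better".

```python
def regressions(baseline: dict, candidate: dict, tolerance: float = 0.05) -> list:
    """List metrics where the candidate exceeds baseline by more than tolerance.

    Assumes every metric is "lower is better" (for example, latency in seconds).
    """
    worse = []
    for name, base_value in baseline.items():
        limit = base_value * (1 + tolerance)
        if candidate[name] > limit:
            worse.append(name)
    return worse


baseline = {"latency_p50_s": 0.010, "latency_p95_s": 0.020}
candidate = {"latency_p50_s": 0.0102, "latency_p95_s": 0.030}
print(regressions(baseline, candidate))  # ['latency_p95_s']
```

A throughput metric would need the comparison inverted, since higher is better there. Keeping the two kinds of metric in separate dictionaries is one way to avoid mixing them up.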

Conclusion

Neural Prism 2105318722 Hyper Beam targets deterministic, modular AI throughput by partitioning workloads into synchronized lanes that balance bandwidth, latency, and compute. The architecture is intended to scale from edge to cloud while preserving provenance and reproducibility. The stated figure is that synchronized lanes can cut end-to-end latency by up to 40% in steady-state workloads while keeping cross-talk low. In practice, start with modular lane provisioning, rigorous governance hooks, and staged benchmarks to validate throughput gains across domains.